Translating high-precision mixed reality navigation from lab to operating room: design and clinical evaluation | BMC Surgery

Components and technical principles

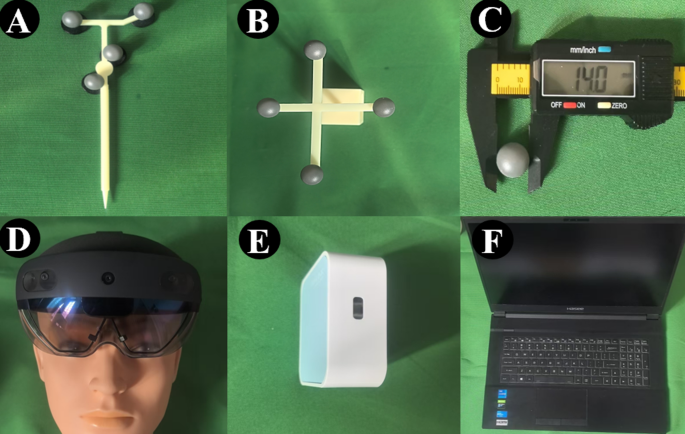

The mixed reality navigation (MRN) system comprises two main components: a HoloLens device for intraoperative visualization and spatial perception, and a PC workstation connected via a local network for content control, including model import and transparency adjustment. Two custom-designed, rigid, 3D-printed tools are incorporated: a handheld probe for high-frequency movement and a patient tracking tool, affixed to the head clamp, for low-frequency monitoring. Both tools carry four 14 mm reflective spheres, which are made of the same material as those used in traditional optical navigation (TON) and are coated with microbeads to enable robust infrared tracking (Fig. 1). Using the HoloLens depth camera, the MRN system captures the reflected signals, which are processed in real time with the OpenCV library [13, 14]. The pose of the patient tracking tool is updated at 5-second intervals to account for minimal head motion (constrained by a surgical frame), whereas the probe is tracked continuously to reflect frequent intraoperative adjustments. For spatial registration between the virtual model and the actual patient, we employ a point-based approach using anatomical landmarks. The Kabsch algorithm is applied to compute the optimal rigid transformation, enabling accurate alignment between virtual and physical spaces [15].

Fig. 1 Components of the MRN. A Handheld probe (high-frequency tool). B Patient tracking tool (low-frequency tool). C Localization marker sphere (14 mm diameter). D Microsoft HoloLens headset. E Portable router for connectivity. F Laptop computer.
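As a hedged illustration of the point-based registration described above, the following Python sketch computes a rigid transform with the Kabsch algorithm using NumPy. The function name `kabsch`, the landmark coordinates, and the test rotation are illustrative assumptions and are not taken from the MRN implementation.

```python
import numpy as np

def kabsch(source, target):
    """Return (R, t) minimising sum ||R @ p_i + t - q_i||^2 over paired 3D points.

    source, target: (N, 3) arrays of corresponding points, N >= 3, not collinear.
    """
    P = np.asarray(source, dtype=float)
    Q = np.asarray(target, dtype=float)
    p0, q0 = P.mean(axis=0), Q.mean(axis=0)      # centroids
    H = (P - p0).T @ (Q - q0)                    # 3x3 cross-covariance matrix
    U, _, Vt = np.linalg.svd(H)
    # Flip the last axis if needed so that R is a proper rotation (det = +1).
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = q0 - R @ p0
    return R, t

# Hypothetical landmarks in model space (mm): four non-coplanar points.
model_pts = np.array([[0, 0, 0], [100, 0, 0], [0, 100, 0], [0, 0, 100]], dtype=float)

# Hypothetical ground-truth pose: 90 degree rotation about z plus a translation.
R_true = np.array([[0.0, -1.0, 0.0], [1.0, 0.0, 0.0], [0.0, 0.0, 1.0]])
t_true = np.array([10.0, -5.0, 20.0])
patient_pts = model_pts @ R_true.T + t_true

R_est, t_est = kabsch(model_pts, patient_pts)
```

With noise-free correspondences the estimate recovers the pose exactly (up to floating-point error); with real landmark picks the residual after alignment is what the fiducial registration error summarises.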

Laboratory tests

To validate the performance of the MRN system, laboratory testing was conducted, focusing on three measures: probe tracking stability; the fiducial registration error (FRE), i.e., the registration error between corresponding fiducial points on the virtual model and on the physical head; and the target registration error (TRE), i.e., the precision of target localization. This experimental framework was developed with reference to the ASTM F2554-22 standard, which provides recommended practices for measuring positional accuracy in computer-assisted surgical systems.

Virtual model spatial registration assessment

Ten simulated head models were designed, and each was randomly assigned an identification number. On each model, 14 randomly numbered 3-mm markers were placed; four non-coplanar markers were used for spatial registration to align the virtual and physical models, and the remaining ten served as targets for accuracy evaluation. Each head model was CT-scanned to obtain imaging data, and the virtual models were reconstructed in 3D using 3D Slicer (Version 5.8) [16].

Five neurosurgeons with no prior experience using the MRN system underwent a 20-minute training session before independently performing spatial registration tasks. For each trial, a head model was randomly selected, and a different set of four fiducial points was randomly chosen on its surface. Post-registration accuracy was evaluated using the root mean square error (RMSE) of Euclidean distances between corresponding points, termed the FRE. Each model underwent three registration trials per surgeon, and the mean FRE across the three trials was recorded as the final spatial registration error for that surgeon. In addition to accuracy, the total time required by each surgeon to complete the localization of all fiducial points was also recorded to assess the learning curve and task familiarity over repeated trials.
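The FRE definition above (RMSE of Euclidean distances between corresponding points, averaged over three trials) can be sketched as follows. This is a minimal standard-library example; the function name `fre_rmse` and all coordinates and trial values are made-up illustrations, not study data.

```python
import math
from statistics import fmean

def fre_rmse(registered_points, physical_points):
    """RMSE of Euclidean distances between paired fiducials (same order, same units)."""
    if not registered_points or len(registered_points) != len(physical_points):
        raise ValueError("point lists must be non-empty and of equal length")
    squared = [math.dist(p, q) ** 2 for p, q in zip(registered_points, physical_points)]
    return math.sqrt(sum(squared) / len(squared))

# Illustrative fiducials (mm): virtual positions after registration vs. physical positions.
virtual = [(0, 0, 0), (50, 0, 0), (0, 50, 0), (0, 0, 50)]
physical = [(1, 0, 0), (50, 1, 0), (0, 50, 2), (2, 0, 50)]

fre_one_trial = fre_rmse(virtual, physical)   # residuals are 1, 1, 2, 2 mm

# Mean FRE across three (hypothetical) trials for one surgeon and one model.
trial_fres = [1.2, 1.5, 1.8]
surgeon_fre = fmean(trial_fres)
```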

Target localization precision assessment

TRE evaluates the error for non-fiducial target points (i.e., the remaining ten markers per model not used for registration). Two neurosurgeons independently performed the localization task on each model, repeating the process three times. The mean TRE across these trials was used as the final metric of target localization accuracy.

After spatial registration, the probe was used under MRN guidance to localize the ten target markers. Localization error was calculated as the Euclidean distance between the probe-localized position and the true marker position. Each marker was localized three times, and the mean value was taken as the localization accuracy for that point. All measurements and data recording were conducted by a proficient individual to ensure the objectivity and accuracy of the data.
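The per-marker calculation described above can be sketched in a few lines of Python. The helper name `marker_tre` and the coordinates are hypothetical and serve only to show the arithmetic.

```python
import math
from statistics import fmean

def marker_tre(true_position, localized_positions):
    """Mean Euclidean distance (same units as input) over repeated localizations of one marker."""
    distances = [math.dist(true_position, p) for p in localized_positions]
    return fmean(distances)

# Illustrative marker (mm) localized three times with the probe.
true_marker = (10.0, 20.0, 30.0)
repeats = [(10.0, 20.0, 31.0), (12.0, 20.0, 30.0), (10.0, 17.0, 30.0)]  # errors of 1, 2, 3 mm

tre_for_marker = marker_tre(true_marker, repeats)
```

Averaging `marker_tre` over the ten target markers of a model would then give a model-level TRE, under the assumption that all markers are weighted equally.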

Stable tracking via associated reference (STaR)

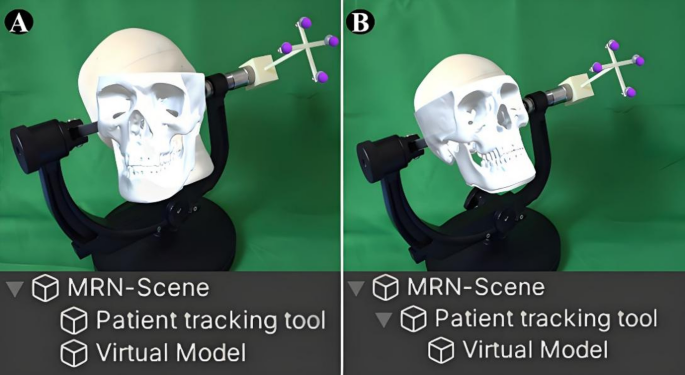

Spatial recognition stability in MRN relies on the simultaneous localization and mapping (SLAM) algorithm in the HoloLens, which performs environment-based localization by detecting and matching spatial features [17]. However, in real surgical settings, the patient’s head is typically covered by sterile drapes, which obscure key spatial features. This may lead to misalignment or unintended displacement of the virtual model during the procedure. We designed the STaR approach to reduce virtual model drift under these conditions. First, the patient’s head is secured with a head clamp, to which the patient tracking tool is rigidly attached. Because the head, clamp, and tracking tool form a fixed structure, any movement of the tool directly reflects head motion. After spatial registration, the virtual model is automatically set as a child node of the tracking tool. From this point on, the model’s position no longer relies on SLAM-based localization but instead follows the movement of the patient tracking tool, maintaining a constant relative position (Fig. 2). To reduce jitter from minor tracking noise or hand tremors, we implemented a threshold-based update strategy. The system checks the tracking tool’s position at low frequency (e.g., every 5 s) and updates the model only if the displacement exceeds 5 mm (adjustable). Otherwise, the model remains static, improving visual stability during navigation (Video 1).

Fig. 2 Schematic illustration of the STaR method. A Without STaR: The virtual model and patient tracking tool are independent. During surgery, head rotation or occlusion by sterile drapes leads to tracking loss and model misalignment. B With STaR: The virtual model is hierarchically linked to the patient tracking tool and maintains a fixed spatial relationship. Any movement of the tool (e.g., head rotation) results in synchronized movement of the virtual model, preserving registration stability.
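The threshold-based update strategy described for STaR can be sketched as a small state holder. This is a simplified assumption-laden example: it tracks position only (the real system presumably also handles orientation), and the class name `ThresholdedAnchor` and its `poll` method are hypothetical, not part of the MRN software.

```python
import math

class ThresholdedAnchor:
    """Holds the pose used to place the virtual model; follows the tracking tool
    only when the tool has moved farther than a threshold since the last update."""

    def __init__(self, initial_position, threshold_mm=5.0):
        self.position = tuple(initial_position)   # last accepted tool position (mm)
        self.threshold_mm = threshold_mm          # adjustable, 5 mm as in the text

    def poll(self, measured_position):
        """Call at low frequency (e.g. every 5 s). Returns True if the model was moved."""
        if math.dist(self.position, measured_position) > self.threshold_mm:
            self.position = tuple(measured_position)
            return True
        return False   # small displacement: treat as noise, keep the model static

anchor = ThresholdedAnchor((0.0, 0.0, 0.0))
updates = [
    anchor.poll((1.0, 1.0, 0.0)),   # about 1.4 mm of jitter, ignored
    anchor.poll((6.0, 0.0, 0.0)),   # 6 mm shift, model follows
    anchor.poll((8.0, 0.0, 0.0)),   # 2 mm from the new anchor, ignored
]
```

Comparing against the last accepted position, rather than the previous sample, means slow drift still triggers an update once it accumulates past the threshold.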

Clinical tests

Since scalp-mounted fiducial markers may shift or detach between imaging and surgery, affecting accuracy, this study used the TON system as the reference. Identical target points were defined via spatial interpolation, and both MRN and TON were used for localization. The Euclidean distance between corresponding points was measured to assess MRN’s accuracy and reliability in surgical settings.

Patient selection

Inclusion Criteria: Patients clinically diagnosed with a need for neurosurgery and suitable for navigation-assisted procedures; preoperative CT or MRI showing clear lesion boundaries; age > 18 years; voluntary participation by the patient or their legal guardian with signed informed consent.

Exclusion Criteria: Imaging indicating diffuse lesion growth; severe cardiopulmonary dysfunction rendering surgery intolerable; presence of other serious comorbidities potentially affecting surgical outcomes.

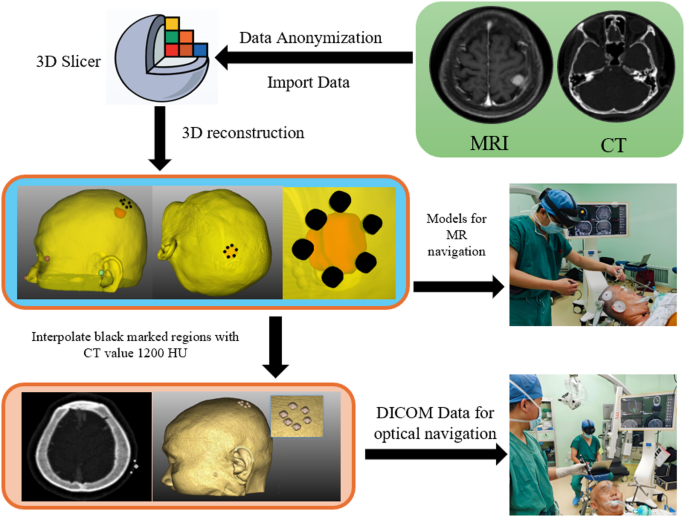

Data processing

Anonymized preoperative CT or MRI imaging data were imported into 3D Slicer to reconstruct 3D models of the skin, lesion, vasculature, and registration points based on lesion-specific anatomical features. Using spatial interpolation, the scalp boundary corresponding to the lesion’s surface projection was defined, and 4–6 uniformly distributed target points (3 mm in diameter) were selected along this boundary. When connected, these points formed a closed contour representing the lesion’s scalp projection, which served as a reference for surgical incision planning. The processed data were exported as digital imaging and communications in medicine (DICOM) files for use in optical navigation and as 3D surface models for integration with the MRN system [18] (Fig. 3).

Fig. 3 Image processing workflow. Import the data into 3D Slicer. For multimodal imaging, first perform registration. Then, reconstruct the lesion in 3D and mark its projection on the scalp. Around the projection boundary, place spheres by spatial interpolation to serve as the surgical localization targets. Finally, export the updated DICOM images.

Surgical procedure

At each of the two hospitals, two neurosurgeons (one experienced with the MRN and the other with the TON) independently performed spatial registration and target localization. Because the surgeon directly viewed the patient’s scalp during MRN localization but relied on a monitor during TON localization, we designed the protocol to perform MRN localization first to minimize potential bias or interference with the subsequent TON localization. Additionally, the skin markings made after MRN localization were kept minimal to further reduce any impact on the TON measurements. The markings were then erased, and the head was rotated (±10°) to mimic intraoperative shifts, ensuring that the induced motion exceeded the intrinsic angular error range of the MR system [19]. The surgeons then repeated localization without re-registration to test MRN’s feasibility and accuracy. The actual surgery proceeded using TON-based localization.

Sample size calculation

The sample size was based on a paired comparison of TRE between MRN and TON. Preliminary data suggested a mean difference of 2 mm and a standard deviation of 3 mm. Using a two-tailed paired t-test (α = 0.05, power = 90%), at least 26 patients were needed. The calculation was performed with the TTestPower module in Python (v 3.12). To account for potential dropouts, we aimed to enroll at least 28 patients.
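As a rough cross-check of the figure above, the sketch below uses a closed-form normal approximation with a small-sample correction term instead of the exact noncentral-t calculation that a power routine would perform. It assumes that the 3 mm standard deviation refers to the paired differences; the function name `paired_sample_size` is hypothetical.

```python
import math
from statistics import NormalDist

def paired_sample_size(mean_diff, sd_diff, alpha=0.05, power=0.90):
    """Approximate n for a two-sided paired t-test.

    Uses n ~ ((z_{1-alpha/2} + z_{power}) / d)^2 + z_{1-alpha/2}^2 / 2,
    where d = mean_diff / sd_diff is the standardized effect size.
    """
    d = mean_diff / sd_diff
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    n = ((z_alpha + z_beta) / d) ** 2 + z_alpha ** 2 / 2
    return math.ceil(n)

# Values from the text: mean difference 2 mm, SD 3 mm, alpha 0.05, power 90%.
n_required = paired_sample_size(2.0, 3.0)
```

Under these assumptions the approximation gives about 25.6 before rounding up, which agrees with the reported minimum of 26 patients.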

Human ethics and consent to participate

All patients or their family members provided written informed consent for surgery and participation in this study. Prior to enrollment, participants were fully informed about the experimental nature of the MRN, including its potential benefits, risks, and available standard treatment options. Importantly, it was clearly explained that the actual surgical procedures would be performed using TON as the standard. Detailed oral and written information was provided to ensure patients understood that the MRN was under investigation and that their participation was entirely voluntary, with the option to withdraw at any time without affecting their medical care. Consent was also obtained for the publication of any identifiable images. All procedures involving human participants were conducted in accordance with the ethical standards of the institutional research committees and the 1964 Helsinki Declaration and its later amendments or comparable ethical standards. This study was approved by the Ethics Committee of the First Affiliated Hospital of Xiamen University (No. [2021]038) and the Ethics Committee of Jiangmen Central Hospital (No. [2021]17).

Statistical analysis

Statistical analyses were performed using Python 3.12. Data processing and visualization were conducted with the Pandas and Matplotlib libraries. In the laboratory study, inter-surgeon comparisons of testing time, FRE, and TRE were evaluated using the Kruskal–Wallis non-parametric test. In the clinical study, descriptive statistics (mean ± standard deviation, range, and 95% confidence intervals) were calculated for navigation time, FRE, and TRE. Paired t-tests were applied to compare navigation time between the MRN and traditional optical navigation (TON) systems, as well as to assess differences in MRN localization accuracy before and after changes in head position. Bland–Altman analysis was used to evaluate agreement between preoperative and intraoperative TRE measurements. A two-sided p-value of less than 0.05 was considered statistically significant.
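For readers unfamiliar with Bland–Altman analysis, the following standard-library sketch computes the bias and the conventional 95% limits of agreement (bias ± 1.96 SD of the paired differences). The function name `bland_altman` and the sample values are invented for illustration and are not data from this study.

```python
from statistics import fmean, stdev

def bland_altman(first, second):
    """Return (bias, lower_limit, upper_limit) for paired measurements.

    bias is the mean of the paired differences; the limits of agreement are
    bias -/+ 1.96 times the sample standard deviation of those differences.
    """
    if len(first) != len(second) or len(first) < 2:
        raise ValueError("need at least two paired measurements")
    diffs = [a - b for a, b in zip(first, second)]
    bias = fmean(diffs)
    sd = stdev(diffs)
    return bias, bias - 1.96 * sd, bias + 1.96 * sd

# Illustrative paired TRE values (mm), e.g. before vs. after a change in head position.
tre_before = [2.0, 3.0, 4.0, 5.0]
tre_after = [1.0, 3.0, 3.0, 5.0]

bias, lower, upper = bland_altman(tre_before, tre_after)
```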
